Introducing Nadia

An AI coach built by an AI company

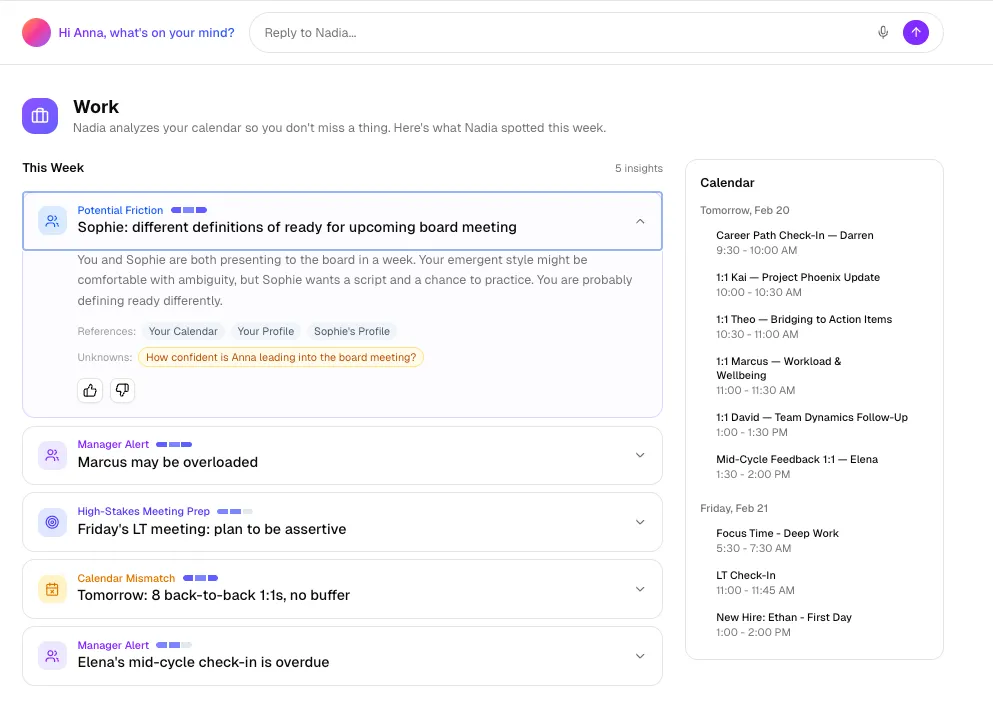

Nadia is a personalized coach for everyone, built to support teamwork and organizational change at enterprise scale.

In this keynote session from Valence's 2026 AI & The Workforce Summit, Wharton professor and Co-Intelligence author Ethan Mollick explores the accelerating shift from AI chatbots to full agentic AI systems capable of completing hours of knowledge work autonomously. Ethan introduces live demonstrations of agentic AI in action, shares landmark research on AI's impact on task completion and workforce performance, and makes the provocative case that HR, not IT, is the function best positioned to lead organizations through this transformation. Talent leaders and CHROs will leave with a sharper framework for measuring meaningful AI adoption and a challenge to build impossible things.

Ethan Mollick, Wharton Professor, Co-Director of the Generative AI Lab

Parker Mitchell, CEO of Valence

Full Session Transcript

The Gap Between AI Pioneers and the Frontline Workforce

[00:00:00]

Parker Mitchell: Ethan is the author of Co-Intelligence, this exploration of what the world will be like as we have another intelligence available to us. I want to begin by asking about this concept of frontier and frontline. You have a foot in both worlds. Have you seen this type of divergence between the sense of possibility and the frontline reality?

[00:00:31]

Ethan Mollick: What's really interesting about any diverse organization is there's almost certainly some people in the organization using these tools as the most advanced users on the planet in whatever industry you're in. Because there are always curious people, people who get how AI works and start working with it. And often they're just not telling you they're doing this. The most advanced users are actually inside organizations by and large — they're just doing it secretly.

When I talk to these people, they're often very excited to tell me what they're building. And then you ask, 'Who do you go to?' And they have no idea who to talk to inside the company. Or they're afraid to say anything anyway, because there's a policy from 2023 banning AI use that requires you to go to a council that then decides on the use case. And within five to seven months, you'll get a hearing in front of the council. And then they'll end up buying a vendor product instead.

Why Advanced AI Users Hide Inside Organizations

The most advanced AI users in any industry are often already inside large organizations — but they're using AI secretly. According to Wharton professor Ethan Mollick, these employees hide their AI use because unclear 2023-era AI policies create fear of punishment, and because employees know that revealing AI-driven productivity gains may threaten their jobs or reputations. This hidden adoption creates a major blind spot for organizational leaders trying to understand their AI readiness.

The Leadership, Lab, and Crowd Framework for AI Success

[00:01:20]

Parker Mitchell: What are the implications for a leader thinking about their whole workforce when a small number of pioneers are driving AI forward, potentially in unofficial ways?

[00:01:34]

Ethan Mollick: My informal method is: leadership, lab, and crowd. You need three things to make AI succeed. And one thing you need is leadership — and that's often what's lacking. People desperately want clear answers, like, 'How do we navigate AI?' And they're not forthcoming. The AI labs are making stuff up and throwing things against the wall. Most consulting companies are just on their first projects. The technology is changing really quickly — I'd argue we went through another step function change in the last six or eight weeks. Leadership needs to realize we're on uncertain territory, but we do need to guide this in some direction, and set the incentives up so people can help get guided.

[00:02:26]

Parker Mitchell: Do you have an example of leaders who have guided that in ways you think are most positive?

[00:02:33]

Ethan Mollick: Sure. Nicolai Tangen, who runs the Norwegian Sovereign Wealth Fund — the biggest pool of money on the planet — overcame his risk managers and said, 'We need to start using AI, and everyone gets access to ChatGPT Enterprise.' And every meeting he asks how people are using it. He's told me that 50% of their office is now writing code, and only 20% of them are coders. By asking and modeling use, you get big advantages.

I've also been impressed by what's going on inside Walmart's corporate offices. They're in a similar situation where they realize it's kind of a big deal, and there are a lot of interesting experiments happening throughout the organization. It's been interesting to watch the contrast with Amazon, where they block any external agents, while Walmart is thinking about how to embrace them internally. But it has to come from the leadership level or it gets stuck.

How Leadership Behavior Drives Enterprise AI Adoption

Leadership modeling is a critical and often missing ingredient in enterprise AI adoption, according to Ethan Mollick. At the Norwegian Sovereign Wealth Fund, CEO Nicolai Tangen gave everyone access to ChatGPT Enterprise and asks in every meeting how staff are using AI. By Tangen's account, 50% of the office is now writing code, though only 20% are coders. Organizations where leadership abdicates this responsibility, delegating to consultants or councils, see AI adoption stall.

Agentic AI in Action: A Live Demonstration

[00:03:31]

Parker Mitchell: You mentioned another step change in the past six or eight weeks. Those of us at Valence who are close to it feel the same thing. Can you showcase what's possible?

[00:03:51]

Ethan Mollick: I've got Claude Code running here locally, and I gave it access to a folder full of fake information about an entire company's AI transition plan. Claude Code is basically an agentic AI system that can run on your computer. I pointed the AI at this folder and said, 'Figure out any issues with the documents, any risks, and come up with a high-level strategy presentation I can give to the CEO right now about risk-proofing this.' And I just gave it that instruction. It will go off and figure out how to do this — writing files, reading files, going online, invoking research agents. Just go do the work.

[00:05:31]

The most important academic paper of last year — that I didn't write — is called GDPVal. They brought in people with an average of 14 years of experience in various industries, representing 5% of the U.S. economy, and had them create complicated tasks from their regular jobs. It took humans about seven hours on average to do this work. It took the AI 5 or 10 minutes. Then a third set of experts blindly judged the outputs — they didn't know whether they were AI or human created — and picked which they liked best.

When this came out last summer, the best model won about 48% of the time. When GPT 5.2 came out this past December, it won or tied 72% of the time. And what that means is: the way you should do work has changed pretty dramatically.

For any intellectual task that you think AI may be able to do that takes more than a few hours, assign the task to the AI, then check the work later.

Even if it doesn't work out and you end up doing it yourself — the 28% of the time AI fails — you'll still save three times as much effort and time as if you had done it yourself. That's a pretty radical change.

[00:09:27]

The real change is agents suddenly became real. That's because the models got better, and the harnesses and systems agents operate in got better. And now you're actually getting real work done with AI. It used to be a chatbot model — working back and forth with AI. That increasingly is not the model. It's almost a management or organization model. And that's a big change.

What the GDPVal Study Reveals About AI's Impact on Professional Work

The GDPVal study is one of the most significant benchmarks of AI capability in professional work. Researchers recruited experienced professionals, averaging 14 years of experience across industries representing 5% of the U.S. economy, to create complex real-world tasks from their own jobs that took humans roughly seven hours. AI completed the same tasks in 5 to 10 minutes. In blind expert judging, the best model's output won or tied 72% of the time as of December 2025, up from about 48% when the study was released the previous summer, signaling a fundamental shift in how knowledge work should be approached.
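The delegate-first rule in this section comes down to simple expected-value arithmetic. The sketch below is one way to work it through, not a calculation from the talk: the seven-hour human time, the 10-minute AI time, and the 72% win-or-tie rate are the figures quoted in the session, while the 30-minute review time and the function name `expected_minutes_delegating` are assumptions added for illustration. It also treats every non-win as a full redo by hand, which is a simplification.

```python
def expected_minutes_delegating(human_minutes, ai_minutes, review_minutes, ai_success_rate):
    """Expected time when you delegate first and redo the task by hand only if the AI output fails review."""
    expected_redo = (1.0 - ai_success_rate) * human_minutes
    return ai_minutes + review_minutes + expected_redo


HUMAN_MINUTES = 7 * 60    # about seven hours, as quoted in the session
AI_MINUTES = 10           # upper end of the quoted 5 to 10 minutes
REVIEW_MINUTES = 30       # ASSUMPTION: time to check the AI's work, not from the talk
AI_SUCCESS_RATE = 0.72    # win-or-tie rate quoted in the session

expected = expected_minutes_delegating(HUMAN_MINUTES, AI_MINUTES, REVIEW_MINUTES, AI_SUCCESS_RATE)
speedup = HUMAN_MINUTES / expected

print(f"Expected minutes when delegating: {expected:.1f}")
print(f"Speedup versus doing it yourself: {speedup:.1f}x")
```

With these inputs the expected cost of delegating is about 158 minutes against 420 minutes of doing the work directly, roughly a 2.7x saving. The result is sensitive to the assumed review time, so it is best read as an order-of-magnitude check rather than a reproduction of the speaker's figure.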

From Prompt Engineering to AI Management Skills

[00:10:01]

Ethan Mollick: Prompt engineering as a task has gotten easier. All the tricks you used to teach people — telling the AI to take things step by step, bribing it, whatever else — that no longer matters. It has no effect anymore. So you don't need to do any of that, which is great. But instead, if I'm assigning the AI a seven-hour task, suddenly this looks a lot like management. The right way to assign a task to AI looks like writing a PRD, or a standard operating procedure, or a product design document. The better you are at explaining what you need, designing the kind of test you want, and assessing the work — the better the results are going to be.

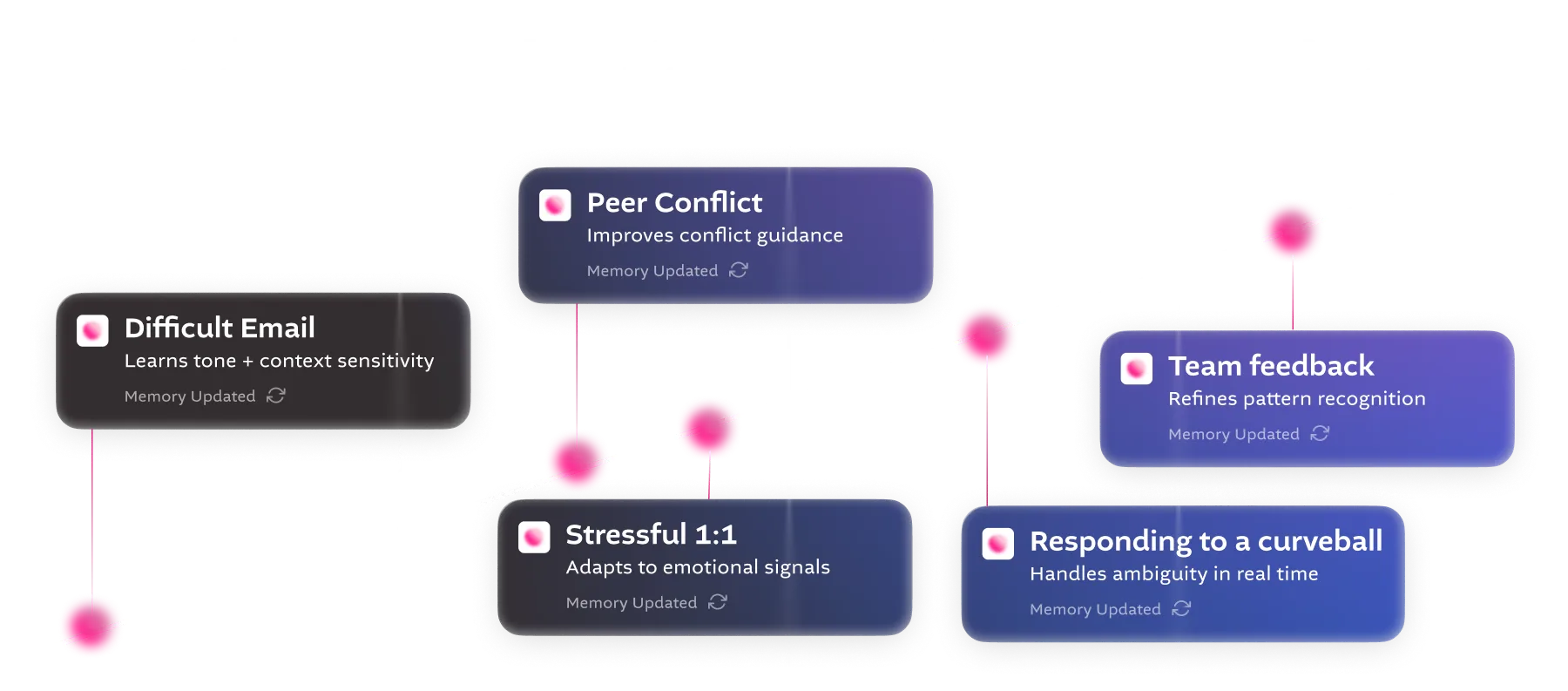

What's happened now is AI systems are smart enough to actually self-prompt themselves. You can have skill files — written in plain English — and the AI can just pick up a skill when it needs it. Start to imagine libraries of these things inside your organization that the AI picks up or not. Your competitive advantage is going to be how good your skills are in a lot of ways.

Why Assigning Work to AI Looks Like Good Management

As AI agents take on longer, more complex tasks, the skill of working with AI has shifted from prompt engineering to management. Ethan Mollick explains that directing an AI agent on a multi-hour task now resembles writing a product requirements document or a standard operating procedure: the clearer the instructions, the better the output. Good managers, he argues, will likely be good at managing AI agents for the same reasons — clarity, delegation, and output assessment.

Why HR Is the New R&D in the Age of AI

[00:14:56]

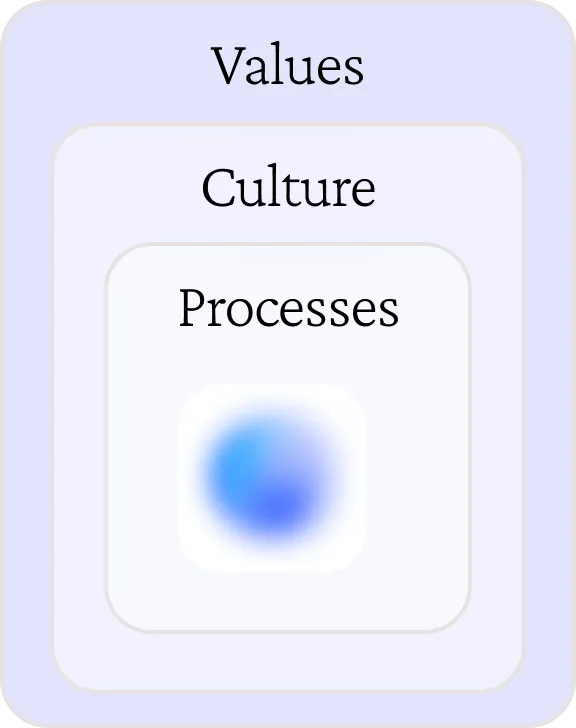

Ethan Mollick: I don't think this is an IT solution. I think it's an HR solution. People don't trust the AIs enough to be smart about giving advice, smart about advising people individually, smart about helping you make decisions. So, they tend to view this like an IT technology — we have to implement this and get people used to it. This is not a static endpoint, and we're going to have to change how work operates.

A lot of executives are just abdicating the responsibility to do this. They're hoping someone will tell them. The ones I see that are successful, they mostly don't want to tell you about it. And to the extent they're willing to, it's not useful to you because they're changing how they operate in a way that's not a universal tool. For the people in this room, this is your moment — not just as HR, or R&D, but because we have this blockage about how we incentivize people, how we reward people, how organizations work, that we have to guide people out of. And the only way to do that is with HR leadership at the center of things.

Why HR — Not IT — Should Lead Enterprise AI Transformation

Ethan Mollick argues that AI adoption in enterprises is fundamentally an HR challenge, not an IT one. The core obstacles — unclear incentives, fear of job loss, distrust of AI, and employees hiding productivity gains — are human and organizational, not technical. He frames HR as the new R&D function: the team uniquely positioned to redesign incentive structures, model new behaviors, and guide organizations through the kind of change that has no established playbook.

Managing AI Agents and Humans: EQ, Theory of Mind, and the Skills That Transfer

[00:16:42]

Parker Mitchell: If I come in on a Monday morning, I'm going to ask the humans on my team how their weekend was. And I'm going to ask my AI agents how much work they've done in the 72 hours since we left on Friday. It feels like those are almost two different skills. Are we going to see someone able to manage both humans and agents as agents become more powerful, or is there going to be a bifurcation?

[00:17:29]

Ethan Mollick: I do not know. I suspect that the EQ skills translate over. There's a paper suggesting that being good at AI is equivalent to having a theory of mind for the AI — which is what a good manager does for people. Understanding what's frustrating it. The AI has things it's stubborn about — it expresses that in words. If you can get a sense of what it's stubborn about, where it gets hung up, where you need to give it answers — that's a kind of common similarity to understanding where people might be stuck, what they need to know, why they're messing up. It's obviously not the same as humans, but there is a real parallel.

There's also a new paper on AI negotiation agents that found the agents amplify the variance between people. If your agent isn't good at negotiating, you lose out a little every time — and that's a multiplier effect. The demographics of the people involved, their experience with AI, all predicted how good their agents would be. Women turned out to be better at building agents than men in this study — even though in most studies on negotiation, women do worse than men for various reasons. Women's negotiation agents outperformed men's. We don't understand all of this yet.

How AI Agents Amplify the Performance Gap Between Employees

Research on AI negotiation agents found that the agents amplify existing performance variance between employees — meaning those who are better at directing AI agents compound their advantages over time. Surprisingly, women built better-performing negotiation agents than men in the study, outperforming in a domain where women typically underperform in traditional settings. Ethan Mollick cites this as evidence that the demographic patterns of AI advantage are still poorly understood and may not follow conventional assumptions.

The ROI Trap: Why Productivity Metrics Lead AI Deployments Astray

[00:20:02]

Parker Mitchell: Many folks in this room are facing the ROI world. They're being asked for the dollars and cents on the investment. How would you help make the argument for R&D?

[00:20:35]

Ethan Mollick: My colleagues have a tracking study at Wharton, and they're finding 75% of companies report positive ROI. I don't think it's the problem it was before. My fear is it's a trap, though — because ROI forces you into a dangerous pattern. Here's my nightmare scenario. I wrote, 'Write a memo based on this PowerPoint, then turn the PowerPoint into a memo, then more PowerPoints.' And it did that. And I kept saying more PowerPoints, and I got 21 of them. And they're good PowerPoints — that's the problem with them.

My fear is that if you aim for ROI, if you aim for productivity gains without thinking about organizations, you are going to drown in a sea of PowerPoints. You don't want PowerPoints, you want outputs. You want change. When ROI becomes the goal, and you don't ask 'productivity for what?' — I can give you an infinite work slop. If that's how you judge performance, you're in trouble.

[00:22:42]

Parker Mitchell: So it automates the tasks that have been designed in an old world of work, versus the investment you need to rethink.

[00:22:50]

Ethan Mollick: Right. My most depressing story: I spoke to someone at a very large company whose job was to lead a team of 14 people producing a compliance report every week. During COVID she couldn't produce the report — but the team was kept together. After returning to the office, they started producing it again. For a year and a half, she hadn't sent the report to anyone — just curious if anyone used it. No one ever asked. Fourteen people producing a report every week that nobody read, nobody wanted, nobody knew what it was there for.

I really worry that if the goal was the PowerPoint, what part of the system are you part of? It's not just change management around how AI does stuff. It's going back and asking why we're doing the things we're doing. Is there value in that? What was the human need?

Why ROI-Focused AI Deployment Produces Output Without Impact

Deploying AI with a focus on productivity ROI risks optimizing for work outputs that were never valuable in the first place. Ethan Mollick illustrates this with a live demo showing AI generating 21 good PowerPoints on demand, and argues that if output volume ('more slide decks than ever') is how you judge performance, you are in trouble. His warning is that organizations will 'drown in a sea of PowerPoints': AI amplifies existing organizational dysfunction rather than eliminating it. Real transformation requires asking not just 'how do we increase productivity?' but 'what is this productivity for?'

The Risk of Underestimating AI: Weak Models and Anchoring Too Low

[00:27:29]

Parker Mitchell: ChatGPT use is 800 million or something, and paid versions are a tiny fraction of that. People are being exposed to very different versions of AI. What happens if people try less powerful versions, make a judgment about its capabilities, and underestimate the power?

[00:28:01]

Ethan Mollick: It makes me deeply nervous. If you want AI to fail, it will fail — because it doesn't work the first time through. You have to iterate with it. My wife does a lot of training and teaching on AI, and she was at a meeting where she was teaching the CLO of a very large organization about AI use. After two seconds, he pushed away his computer and said, 'It doesn't work,' and walked out. There's an existential crisis you have to be kind to people about.

AI was bad at math six months ago. It is not bad at math anymore. AI was bad at research. These are solved problems. Give someone a frontier model and things go very differently. You have to be paying 20 bucks or 200 bucks and using one of these systems. You can't be using Copilot and say, 'I understand how AI works.' You have to use a frontier model. That's where the advantage is.

[00:30:55]

Parker Mitchell: Anchoring just too low.

[00:30:57]

Ethan Mollick: Anchoring too low.

Why Using Outdated AI Models Leads Organizations to Underinvest

Organizations that evaluate AI using free or outdated models consistently underestimate its capabilities and anchor their ambitions too low. Ethan Mollick warns that AI models from even six months ago had significant limitations — poor math, unreliable research — that have since been solved in frontier models. He recommends leaders personally use frontier models (paid versions of leading AI tools) to calibrate their own understanding, rather than drawing conclusions from weaker systems they or their teams briefly tried and abandoned.

Looking Ahead: What AI Adoption Should Look Like by 2027

[00:31:09]

Parker Mitchell: If we're all together here in February 2027, what do you think the big surprise of 2026 would have been?

Ethan Mollick: If you want to show people the future of AI for 99.9% of people, just show them what AI does right now. Agentic work, long-duration agentic work with specialized tools for knowledge workers. We will see more specialized tools. More people will be shifting to directly using these models. And we're going to start seeing real disruption as the difference between vendors who do very specialized things where it makes sense that an outside vendor does the work — versus vendors who are just reselling you OpenAI products at a premium — becomes obvious.

[00:32:33]

Parker Mitchell: What would your hope be 12 months from now for the folks in this room for the progress they were able to make in having AI adopted across their workforce?

[00:32:37]

Ethan Mollick: Stop counting adoption as the percent of people who've touched your AI systems. You're making a very bad mistake that way, especially if you have bad AI systems. I've talked to one very large company where the senior management told me great things — and then a junior manager told me, 'Yeah, we have to do 90% of our work with AI, so all we're doing is summarizing every meeting in Copilot because there's no other instructions on how to do it, and we have to hit our metric.'

If you're not hacking the reward system, opening up the metrics, you're in trouble. You have to turn your team into R&D people. And that means you can't just check off AI use, increase productivity 17%, more slide decks than ever. These are real problems you have to deal with.

I'd like to see you have much more interesting metrics and KPIs than 'X% of people use this, or we've produced X more lines of code.' If you don't have innovations, if you haven't built an impossible thing — you should be building impossible things. And if you haven't jettisoned at least one thing that was critical to your organization, everybody could do this now. Stop doing it.

One place where I could see the biggest implications is L&D and mentoring. Mentoring at scale now — that changes stuff. If you think products like Nadia and others could do the mentoring you need to do, but you haven't changed how your organization works when you have mentoring at scale, something is wrong. That changes how your talent pipeline should work. You should be thinking much more ambitiously.

[00:34:29]

Parker Mitchell: Build impossible things. Jettison critical things. And if you haven't gotten to either of those two — probably on a monthly basis — you're not close enough to the frontier. Thank you, Ethan.

[00:34:42]

Ethan Mollick: Thank you so much. Awesome, thank you.

In this session from the 2026 Valence AI & The Workforce Summit, Prasad — former head of People Analytics at Google and Stanford researcher — presents one of the summit's most intellectually rigorous frameworks: the Judgment Gap. Drawing on 15 years of Google research, back-of-envelope math showing AI is 2,000 times cheaper than human cognitive processing, and three behavioral science foundations (Gary Klein on pattern recognition, Phil Tetlock on calibrated confidence, and Michael Polanyi on tacit knowledge), Prasad argues that the default AI playbook — automate the routine, upskill the workforce — is creating a dangerous gap between the high-stakes judgment required of tomorrow's leaders and the developmental pathways available to build it. He closes with three concrete organizational shifts and a single litmus test question: Can your people tell when AI is wrong?

Prasad, Former VP of People Analytics at Google

Full Transcript

The Alia Decision: What High-Stakes Judgment Requires

[00:00:01.056]

Prasad: Alia Jones is a VP. She's in one of your organizations, and she has to make a critical promotion decision. She has an important role to fill, and she's down to two candidates. Her gut says Molly — her protege, someone she's worked with for two years. Alia knows exactly how Molly thinks and how she responds to pressure. There's a new algorithm that her HR department has come up with, and the algorithm says she should go with Ed. Ed is a 96% match for this role compared to Molly's 83%. The algorithm also says Ed has a much wider cross-functional network — critical for this role — but also that Ed is a higher flight risk than Molly.

That is the context that the Harvard Business Review came up with for a case study they asked me to apply. The question is: how does Alia make this decision? What is her capacity to make it, and how did she form that capacity? Whether it's an important people decision like this or a business decision, we all want our leaders to be excellent at making good calls when the stakes are high.

In my 15 years at Google, these are exactly the kinds of questions we were asking ourselves. What enables organizations to make great people decisions, and what enables people to make great business decisions? You may have heard of some of the work my team did — the science of hiring, Project Oxygen on the role of managers, Project Aristotle on psychological safety and team effectiveness. What we brought was curiosity and rigor to important questions. For every project you've heard about, there were at least a few shared only internally at Google, and several others that went nowhere — questions we asked without finding satisfactory answers. We didn't get bogged down by failure; we persisted in thinking about important questions.

How Human Judgment Is Tested in High-Stakes Promotion Decisions

A Harvard Business Review case study frames the challenge of human judgment under AI: a VP must decide between promoting a trusted protege (83% algorithmic match) and a higher-scoring candidate (96% match) with a wider cross-functional network but higher flight risk. The case illustrates what judgment requires (calibrated confidence, contextual pattern recognition, and the willingness to own an outcome) and why those capacities cannot be delegated to an algorithm. This is the kind of decision that will define leadership value in an AI-assisted organization.

Productive Cognition: What Organizations Are Really Optimizing For

At Google, we came to believe that all of this research pointed toward one underlying concept. I want to introduce a term I think of as productive cognition. Every organization, regardless of industry, is a knowledge organization. Everything is getting more technical, more knowledge-intensive. Every knowledge organization is a system for converting thinking into value. That is where productive cognition comes in.

Productive cognition is the cumulative intellectual capacity across all of your people — embodied in your products, services, and customer relationships. The 'productive' part matters: it accounts for not just intellectual capacity, but subtracts every place where you have friction, misuse, underutilization, or suppressed voice. That is the full picture.

Based on what I've told you about the Google organizational model, this is implicitly what we were solving for. Productive cognition at Google was the product of three factors: intellectual capacity, information abundance, and effective collaboration. These were multiplicative — not additive. They build on each other. If one term is weak, the system collapses. Every organization has its own recipe. What matters is internal consistency and that multiplicative effect.

What Is Productive Cognition? A Framework for Measuring Organizational Thinking

Productive cognition is the cumulative intellectual capacity of an organization's people, as embodied in its products, services, and customer relationships — minus every point of friction, misuse, or suppression. Developed by former Google People Analytics head Prasad, the concept treats every organization as a knowledge organization: a system whose core function is converting thinking into value. At Google, productive cognition was the multiplicative product of intellectual capacity, information abundance, and effective collaboration. AI changes the equation by making thinking abundant — shifting the binding constraint from capacity to judgment quality.
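The difference between a multiplicative and an additive model can be made concrete with a few lines of arithmetic. This is a minimal sketch, not Google's formula: scoring each factor on a 0 to 1 scale, the function names, and the example values are all assumptions chosen for illustration.

```python
def productive_cognition(capacity, information, collaboration):
    """Multiplicative model: the three factor scores (assumed to lie in [0, 1]) are multiplied."""
    return capacity * information * collaboration


def additive_score(capacity, information, collaboration):
    """Additive baseline for comparison: the plain average of the three factor scores."""
    return (capacity + information + collaboration) / 3


# HYPOTHETICAL scores: one organization strong on all three factors,
# one that is identical except for weak collaboration.
strong = (0.9, 0.9, 0.9)
one_weak = (0.9, 0.9, 0.1)

for label, scores in [("all strong", strong), ("one weak factor", one_weak)]:
    print(
        f"{label}: multiplicative={productive_cognition(*scores):.3f}, "
        f"additive={additive_score(*scores):.3f}"
    )
```

Under these assumed scores, the additive average falls from 0.900 to about 0.633 when collaboration is weak, while the multiplicative product falls from 0.729 to 0.081. That ninefold drop is the sense in which one weak term collapses the system.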

AI Is 2,000 Times Cheaper Than Human Cognitive Processing — and That Changes Everything

Here's where the formula changes. AI is making thinking abundant, and that changes the dynamics completely. Here is some back-of-envelope math I did a few weeks back. Take a typical knowledge worker — regardless of role or domain. A workday consists of responding to emails and Slack threads, initiating some, composing documents, crunching data, attending meetings. If you look at all the information processed and produced through those interactions, it roughly comes to around 125,000 words per day.

Here's the punch line. When you look at the fully loaded cost of a global knowledge worker and compare it to the cost of AI token processing for equivalent volume, the ratio is approximately 2,000 to 1. AI is 2,000 times cheaper than human cognitive processing. I want to acknowledge this is a simplistic calculation — human cognition is much richer, because it includes problem-solving, not just output generation. But cost pressures like this have repeatedly changed economies completely. When we had shipping containers. When we had mobile phones. Those cost pressures ensured that existing, incumbent, expensive structures gave way. The same will happen here.

Why AI Being 2,000 Times Cheaper Than Human Cognition Is a Structural Economic Force

A back-of-envelope calculation by former Google People Analytics head Prasad estimates that a typical knowledge worker processes and produces approximately 125,000 words of information per day. When the fully loaded cost of that cognitive output is compared to the cost of equivalent AI token processing, the ratio is approximately 2,000 to 1 — AI is 2,000 times cheaper. While human cognition is richer than this calculation captures, cost pressures of this magnitude have historically restructured entire industries. Organizations should treat this as a structural economic force, not a marginal efficiency improvement.
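The structure of a calculation like this is easy to sketch, even though the session does not give its inputs. In the example below only the 125,000 words per day comes from the talk. The daily worker cost, the tokens-per-word conversion, and the token price are hypothetical placeholders, and the function name `cost_ratio` is invented for illustration. None of these should be read as the speaker's actual numbers.

```python
def cost_ratio(daily_worker_cost, words_per_day, tokens_per_word, price_per_million_tokens):
    """Ratio of a worker's daily cost to the AI token cost for the same word volume."""
    tokens = words_per_day * tokens_per_word
    ai_cost = tokens / 1_000_000 * price_per_million_tokens
    return daily_worker_cost / ai_cost


WORDS_PER_DAY = 125_000          # figure quoted in the session
DAILY_WORKER_COST = 500.0        # HYPOTHETICAL fully loaded cost per day, in dollars
TOKENS_PER_WORD = 1.3            # ASSUMPTION: rough rule-of-thumb conversion
PRICE_PER_MILLION_TOKENS = 1.50  # HYPOTHETICAL blended token price, in dollars

ratio = cost_ratio(DAILY_WORKER_COST, WORDS_PER_DAY, TOKENS_PER_WORD, PRICE_PER_MILLION_TOKENS)
print(f"Human-to-AI cost ratio: about {ratio:,.0f} to 1")
```

These placeholder values produce a ratio on the order of 2,000 to 1, but only because they were picked to land near the talk's figure. The useful part is the shape of the calculation: the ratio scales linearly with worker cost and inversely with token price, so any plausible inputs can be substituted to test how robust the conclusion is.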

The Judgment Gap: Why the Default AI Playbook Is Dangerous

What I'm seeing is organizations all around adopting a default playbook because they implicitly understand some of this. The typical playbook: automate the routine — not just robotic process automation, but deeper because now we have AI. Upskill all our people to use AI tools. Keep expanding the scope over time, since AI will only get better.

There's a substrate to this thinking that I don't think is bad, but I do think is, in some ways, dangerous. The implicit assumption is that the value of humans should be restricted to things that AI hasn't touched yet. As AI keeps touching more and more, the value of humans in this framing becomes increasingly residual. And that I find very unnerving.

Where will human work persist? First, the AI can generate 21 PowerPoints, but your leadership team, your CEO, your board still have the same 24 hours. Someone still has to filter all of this and make calls. Second, we still need to take accountability for high-stakes decisions. Organizations have tried to blame AI tools for bad outcomes; courts and public opinion have firmly rejected that. Third, there are many high-stakes decisions, like Alia's, where we want humans to wrestle with what good looks like. AI can generate possible outputs. But we want humans to wrestle with them and have the conviction of owning what they decide.

A lot of the work immediately ripe for automation beyond the old RPA is work with few degrees of freedom — repetitive transactional work where you can make slight deviations around rules and processes. But here's what breaks when that routine work disappears. That work built confidence. We all worked through imposter syndrome by doing the work, knowing the work, and knowing that we knew it. Repetition built recognition — by doing things many times, we developed instinct for where failure might occur. And when you specialize, you develop your distinctive signature of taste. All of that repetitive work, if automated by AI, creates a gap: the demands on tomorrow's leaders are going to be higher, but the preparation gets weaker. That is what I call the judgment gap.

▶ What Is the Judgment Gap and Why Should HR Leaders Be Concerned?

The judgment gap is the widening distance between what high-stakes leadership decisions require — pattern recognition, calibrated confidence, ownership of outcomes — and what the AI-assisted workplace actually develops in people. As AI automates the routine, repetitive work through which professionals historically built confidence, recognition, and taste, the developmental pipeline for senior judgment collapses. Coined by former Google People Analytics head Prasad, the judgment gap describes an organizational risk that compounds silently: leaders are asked to make increasingly consequential decisions with decreasing experiential preparation.

How Judgment Forms: Three Research Foundations

If judgment becomes the binding constraint, then we need to understand how it forms. There is substantial research here, and I'll summarize three foundations that are useful as we think about how to design for judgment.

Gary Klein: Pattern Recognition Requires Varied Contextual Exposure

Gary Klein studied firefighters and ER nurses who respond to critical situations with very little time — no opportunity to run a spreadsheet and think through alternatives. What he found is that they all develop an instinct for what to do by having exposure to varied contexts in very different environments until recognition becomes instinct. You need those reps, but they have to be varied. Exposure to enough varied contexts is what allows people to recognize patterns quickly under pressure.

Phil Tetlock: Calibrated Confidence Is More Valuable Than Certainty

Phil Tetlock, a psychologist at Wharton, studied forecasters who predict geopolitical events. The best forecasters were not people with high confidence in their specific outcomes; they were people who knew the limits of their confidence. The example: Tom Brady predicting the Super Bowl said, 'Both teams are good. On any given day, either could win. But if they played ten times, I think the Seahawks would win six times and the Patriots four.' That is calibrated confidence. You don't want salespeople saying they'll win 100% of bids. You want them to say: 'I know which bids are higher probability, and I know where to put more work into the proposal.'

Michael Polanyi: Tacit Knowledge Transfers Through Apprenticeship

Michael Polanyi studied how expert craftsmen transfer knowledge. His work on tacit learning is probably familiar to many here. His core insight: we all know more than we can tell. The surgeon's hands know more than the surgeon can articulate. Apprenticeship — observing someone at work, having them shape your experience — is the primary mechanism for transferring that tacit knowledge. It cannot be codified or taught from a slide deck.

Where all of this converges: Gary Klein's contextual embedding helps with pattern recognition and fast decisions. Phil Tetlock's outcome ownership helps with calibrated confidence. And Polanyi's apprenticeship is the primary vehicle for tacit knowledge transfer. These are the three pillars of judgment development — and none of them happen automatically in an AI-first workplace.

▶ Three Research-Backed Foundations for Developing Human Judgment at Work

Research from three leading scholars converges on how professional judgment actually develops. Gary Klein's studies of firefighters and ER nurses show that pattern recognition requires varied exposure to high-stakes contexts until recognition becomes instinct — reps matter, but variety is essential. Phil Tetlock's work on superforecasters shows that the best judgment comes from calibrated confidence: knowing the limits of what you know, not certainty. Michael Polanyi's work on tacit knowledge shows that the most critical professional knowledge — the kind that experts know but cannot articulate — transfers primarily through apprenticeship. Organizations designing for AI must actively engineer all three.

Three Concrete Shifts to the Default AI Playbook

Shift 1: Close the Loop

People need to know the outcomes of the decisions they make. In complex organizations, this is genuinely difficult — outcomes are delayed, attribution is unclear, information is hard to collect and return. But outcome transparency alone is not sufficient. What you need in addition is structured reflection: when you make a decision, capture why you made it, what risks you considered, what your confidence was in the outcome. A lightweight decision journal. Then, months later — with your manager or an HR partner — revisit the calls you made. Which ones worked? Which didn't? Why? What did you learn? AI coaching tools like Nadia can help with every one of these structured reflection processes.

Shift 2: Design the Gradient

You cannot thrust someone into VP-level decisions from day one. You cannot say, 'I automated all the routine work. Good news, new graduate, come be a VP now.' You have to design the developmental progression deliberately. Use AI to create simulations, case studies, and scenarios that build judgment reps. Design those reps to transfer not only the explicit knowledge codified in your business processes, but the implicit knowledge of how your organization works and the tacit knowledge that is hard even to articulate. This is the challenge, and it is where a partnership with Valence can genuinely help your people.

Shift 3: Separate Review from Correction

Imagine that everyone in your organization is going to be reviewing AI-assisted work. There are two approaches a leader can take. One: 'This isn't compliant with our format, let me correct it' — a textbook approach focused on right answers. The other: use the review as an apprenticeship moment, asking 'what information did you take into account? What risks did you consider?' That second approach transfers the judgment, not just the correction. That is the addition to the default playbook that I would have you think about.

▶ Three Organizational Shifts to Develop Human Judgment in an AI-Assisted Workplace

Former Google People Analytics head Prasad identifies three concrete departures from the standard enterprise AI playbook that are necessary to develop human judgment alongside AI capability. First, close the loop: create structured retrospectives so people see the outcomes of their decisions and reflect on why they made them — lightweight decision journals reviewed months later with a manager or coach. Second, design the gradient: build deliberate developmental progressions using AI-generated simulations and scenarios, not just on-the-job experience. Third, separate review from correction: use the review of AI-generated work as an apprenticeship moment, not a compliance check. AI coaching tools like Nadia can support all three shifts.

Measuring Judgment Development — and the One Litmus Test Question

Measurement matters here. You can look at activities — how much work is going into pure coordination versus judgment-oriented work. You can look at reps and data from AI coaching tools. You can assess human calibration using what is called a revealed preferences survey: ask people, 'When you're facing a difficult decision, whose judgment do you rely on?' Measure the evolution of that answer over time. And look at outcomes themselves — how quickly are people course correcting? How quickly are they transferring learning from one situation to another across high-stakes business decisions, not just people decisions?

Let's get back to Alia. There's a default playbook version that concerns me: go all-in on AI implementation, and future leaders like Alia are left with no basis for making these calls — exposed only to AI recommendations, acting as signatories to decisions they don't understand, getting no outcome feedback, receiving weak coaching. Or you could have a judgment-enhanced version where leaders are getting to exercise real stakes, making decisions, receiving the right mentorship and coaching — set up to succeed and thrive in an AI-enhanced world.

Here is my closing thought. We talk always about automation or amplification. But I want you to think about two other dualities that come with AI. Every AI interaction either sets your people up for dependency or for development. There is work we want to offload cognitively to AI — that is appropriate. But if people default to 'go to AI, get the answer,' judgment atrophies. Design AI interactions as development moments, and efficiency will follow.

And here is the single litmus test question you can ask your people tomorrow, six months from now, a year from now: Can you tell when AI is wrong? Two years ago, most of us could. Today, some of us can, sometimes, for some situations. In the future — it depends entirely on us. Thank you.

▶ The Single Litmus Test for Whether Your Organization Is Developing or Eroding Human Judgment

Former Google People Analytics head Prasad closes with one question that reveals whether an organization is building or eroding human judgment in the age of AI: 'Can your people tell when AI is wrong?' Two years ago, most knowledge workers could answer yes. Today, the answer depends on the individual and the situation. In the future, the answer will depend on deliberate organizational design choices made now — whether AI interactions are designed as development moments or dependency moments. Every AI interaction, according to Prasad, sets people up for one or the other.

[00:28:07]

Moderator: Thank you, Prasad.

In this session from the 2026 Valence AI & The Workforce Summit, Prasad — former head of People Analytics at Google and Stanford researcher — presents one of the summit's most intellectually rigorous frameworks: the Judgment Gap. Drawing on 15 years of Google research, back-of-envelope math showing AI is 2,000 times cheaper than human cognitive processing, and three behavioral science foundations (Gary Klein on pattern recognition, Phil Tetlock on calibrated confidence, and Michael Polanyi on tacit knowledge), Prasad argues that the default AI playbook — automate the routine, upskill the workforce — is creating a dangerous gap between the high-stakes judgment required of tomorrow's leaders and the developmental pathways available to build it. He closes with three concrete organizational shifts and a single litmus test question: Can your people tell when AI is wrong?


Former VP of People Analytics

We sat down with LinkedIn co-founder Reid Hoffman and Financial Times editorial board chair Gillian Tett to go beyond the headlines and get a deeper understanding of the economic and workforce impact of AI. Their message for HR leaders: act now, because the change is already here.

1. AI is here to amplify human potential. Instead of focusing on AI as a way to cut costs or reduce headcount, Reid and Gillian see AI as augmentative: a new member of the team that changes workflows and unlocks capacity for human creativity.

2. "AI is the best educational tool we have created in human history," Reid says. There are real challenges around AI's impact on how young people learn and develop the skills they need to enter the workforce. But Reid and Gillian explore how AI can create new models of education and training that personalize instruction in a way that was previously only possible at the most prestigious universities.

3. With AI, everyone becomes a manager. Reid sees a near future where every employee has a team of AI assistants that they manage to get work done. "There won't be such a thing as individual contributors anymore." In this world, the same EQ skills that make people great managers and coworkers become the skills that make them AI super-users.

4. "If you're not using AI, you're going to be under-tooled," says Reid. From leveraging AI to run better meetings to reimagining what's possible to achieve with an AI assistant at every employee's side, Reid and Gillian outline concrete starting points for driving change at scale. Because, as Reid says, in six months, if AI isn't embedded in your workflows, "It'll be like saying, 'I'm a carpenter, but I use rocks, not hammers.'"

Co-Founder at LinkedIn, Manas AI, & Inflection AI

Editorial Board Chair, Financial Times

Parker Mitchell: I thought maybe we could kick it off actually with you, Gillian, and the conversation, the public narrative around AI. How is the media covering the idea of how AI is gonna impact work and the economic and social impacts of that on our livelihoods? And wondering what are the things you think the media might be under-covering, or what are the stories that you think should get more coverage?

Gillian Tett: Well, I think the media is pretty negative on AI at the moment. A cynic might say that's because they know that their own jobs are threatened. And one of the really striking things about the AI revolution is that it's threatening white-collar jobs, not just blue-collar jobs. And so it was very easy for pundits, who are working at financial newspapers, to say, actually, productivity increases are good, which is code for let's have fewer blue-collar workers if we have automation. And now that white-collar jobs are threatened as well, be they lawyers or traders or journalists, suddenly the narrative has changed.

So I think there's a real concern about AI. From time to time, there is now a recognition about the extraordinary things that AI can do in relation to life sciences and other research capabilities. But for the most part, it's pretty negative.

Reid Hoffman: Well, Gillian's exactly right about the, you know, kind of general media response. And, you know, I think that the kind of things that aren't covered is that one, look, whatever you're wishing, AI is here. If you haven't actually, in fact, already personally found significant use cases that would help you in your own work and in your own life, that means you're not trying hard enough. And there's a general reflex to wait for when it stabilizes. Like, well, when they release the new iPhone, I'll check this out. And it's like, no. It's actually improving on an order of magnitude of months. And so, you actually have to be, you know, kinda going with it. And so what, I think that it is scary. It is changes. It is changes to white collar work. It is the case that businesses start when they, you know, when they can encounter something new with how do we cut, you know, costs.

So, you know, could we take our marketing department and could we take it from 10 people to two people? You know, can we do this with fewer journalists? You know, hence the point that Gillian was, you know, gesturing at. But the actual thing is that it'll actually change workflows and change everything else and that, as individuals, you can already begin to see what that is. And so we're telling, one, the positive story, namely, how do you get into it? What should you be doing? What should you be experimenting with? Two, what are the ways that we are essentially experimenting with to expand our capabilities as individuals and as offices, because those capabilities are really there.

Like, you know, for example, one of the things that I regularly use deep research for is as a research assistant on a broad variety of topics. What I'm trying to actually... I can now think much more broadly and synthetically about a number of different kinds of areas, relating them to what I'm doing, and I have an immediate research assistant. Now you say, should I get rid of the research assistant I have? No. Actually, frequently what I'll do is generate something and send it off to the research assistant saying, hey, could you track down this, this, and this about this and maybe use, you know, deep research to follow up on these things and so forth. You know, as an iteration and as a thing to do. And I think that's the kind of thing about that kind of positive story that's really important.

And the other thing that, Gillian was gesturing at is the negative story that we've kind of, you know, kind of wrapped ourselves in the West is actually ultimately, you know, damaging to us. It isn't to say that we don't pay attention to the risks. It isn't to say don't pay attention to the issues. But the question is, AI is coming. It's like we're in a river. It's going down. You can say, I don't like this river. I'm gonna throw my oar up, and I'm gonna yell. Like, okay. That's a really good way of navigating a river. Right? So it's like actually, it's like okay.

So how do I start steering? What do I learn? What's going on in terms of the currents and that kind of thing. And that's the kind of thing that we need to be doing as individuals, as industries, and as societies, and, obviously, of course, you know, for our audience, as companies.

Gillian: I strongly agree. And in fact, one thing I'd say is that the reason we call it artificial intelligence is because of the person on the wall behind me, and I'm sitting in King's College in Cambridge, which is where Alan Turing was based, and that's actually his portrait up on the wall behind me, and literally about 100 yards away from me is where he used to live and did much of his work. It's called artificial intelligence because almost exactly eighty years ago, Alan Turing did the Turing test and basically spawned the term artificial intelligence.

But I often wonder how different it would feel if we called it instead augmented intelligence or accelerated intelligence because the way I see it is that it's not so much about replacement all the time, although sometimes it is, let's be honest. It's more about being an additional member of a team. And I say that because a few years ago, I gave speeches saying that there was one thing that AI could never do, which was to tell a really good joke, and therefore comedians had job security. And it turned out that I was totally wrong because the reason why I thought AI would never be able to tell a joke and challenge comedians was because the pre-transformers models of AI, which were basically path-dependent based on logic, essentially could only produce very basic, crude jokes like knock-knock, who's there jokes, or wordplay or Christmas cracker jokes, and they weren't funny. Post transformers, where, essentially, you're dealing with probabilistic observation, they can produce jokes that are funny about half the time.

And the dirty secret of humor is that actually comedy writers for late-night TV shows are only funny half the time. And the reason they know that is because those jokes are written not by individuals but by teams who chuck jokes into the mix and bat them around, and they finally produce a late-night television comedy. And adding an AI agent into that mix doesn't necessarily replace the humans. It simply adds to the jokes that are basically swirling around and gives more checks and balances. And humor is fascinating because humor in many ways is the very definition of cultural anthropology, which is what I studied for my own PhD and have done academic work in, because humor can't be predicted by an algorithm because it depends on contradictions in culture, on ambiguity and silences that we don't like to talk about, and very tribal behavior. So the fact that AI can now master even that but can do that by being part of a team is really important as a parable for what we might see emerge in many professions.

Parker: Yeah. Let me double click on that, Reid, because you've talked about having teams, individuals, having assistants, teams having assistants. I'd love to hear you expand on that vision for what work might look like.

Reid: So let me start with just a couple of near certainties to predict in the near future. And near future is like small number of years or medium number of months. Which is, one, there won't be such a thing as an individual contributor anymore because essentially, every person who's doing this will have a small to a large team of agents facilitating what they're doing, and they will be managing that process with those agents. That's one thing. So it's kind of like almost like the managerial skills, like, the kind of managerial skills you might exhibit today when you're using a deep research agent or other bots in order to do stuff. That's gonna get deepened.

And another one, this one actually is a prediction I made in the MIT Technology Review a number of years ago, is that in every single meeting that we do, we will actually have an AI agent, not just for transcription and notes, all of which is happening now, but where that AI agent would be going, oh, you know, Gillian and Reid are talking about this. Do you realize also you should talk about Alan Turing in the following question, or you should refer to the following thing about the Turing papers in the King's library? You know?

And so, you know, that kind of participation for information, for follow ups, for questions, you know, will then become like, it's almost like, wait, wait. Can we have this meeting? We don't have the AI agent turned on yet. We're gonna be so much less effective if the AI agent isn't here in the things we're doing. And, you know, part of this amazing transformation that we're about to go into, this is, like, only a few years into the future. And, you know, in fact, when you're doing white collar work, if you're not using AI tools, you'll be under-tooled. It'll be kinda like saying, I'm a carpenter, but I use rocks, not hammers. Or, you know, like, it's kinda gonna be a standard part of what is professional competence, and then that will spread through the entire team.

So I think this kind of massive capability change is coming so fast that you need to get engaged and you can't, like, say, we're gonna set, you know, these three people to go off and study it and come back in six to twelve months and tell us about it. I think that's too slow. So I think what you want to ask is, what are the ways you can experiment quickly? So, you know, simple things that you can do that I've, you know, done with, you know, organizations that I'm on the board of and others is to say, well, you know, make sure that there's kind of like a weekly, biweekly, you know, fortnightly review where everyone says, here's what I've tried, here's what I've learned, here's the things that I'm doing. Right? And then you can also similarly go, and here's some of the things that we should be doing, you know, as a group.

For example, when I'm working with my groups, one of the things I do is I take a transcript of the meeting, and I feed it into AI with some relatively standard prompts that say, is there anything we missed? Was it an important question? Was it an important source of information? Was there a follow-up? And this set of different things, because we had the meeting, we did the transcript, we just put the transcript in, and it gives us a very quick response to that. It can even be before the meeting's over, is how fast this can be, where you go, "Oh, right. Yeah. Yeah. We should do that too." And so do anything you can to start experimenting and seeing what works for your company culture, your market position, the way that you operate in your groups, and not just, hey, you know, let's go assign Sue or Fred to go, you know, generate a report on this that, you know, we'll go look at in x months.

Parker: Gillian, how are you seeing those adoption, sort of steps forward, step back, step sideways? How are those playing out either in the conversations you have with leaders or even potentially within journalism and the Financial Times itself?

Gillian: Well, I wear several different hats in that I am overseeing this college, King's College in Cambridge, where academia is potentially being very challenged by AI in many ways. The good news is that the life scientists and the other scientists that I deal with in the college are being given extraordinary wings all of a sudden to do the kind of research at speed that most had never dreamt to be possible. So they are totally positive about AI.

Many people in the humanities are pretty negative about AI because they can see that it's basically either going to undercut their role as teachers, or, in their view, make, you know, a whole generation of students pretty stupid because they're cheating with AI and not using their brains. I mean, as it happens, Cambridge and Oxford are probably the most ChatGPT-resistant types of education in the world because they rely so heavily on small face-to-face interactions and what we call tutorials and supervisions, where students have to write essays and then talk about them for an hour or two. And that is AI-resistant in many ways, or rather AI-enabled, because you can use AI to research your paper, and then you're forced to discuss it as a human being, using what you've seen from AI. So I actually expect that going forward, we may well see more spoken exams, more teaching patterns of the form that we have in Oxford and Cambridge.

In terms of journalism, you know, I was actually meeting with the CEOs of most of the big British media companies yesterday and moderating a discussion with them all about this very topic. And the message is that they are very threatened by the fact that AI companies are scraping their data with no monetization or monetary reward, often no attribution. And they're basically demanding some form of compensation for journalistic content to be used to train models, which I think is entirely fair. What form it will take is unclear, but there needs to be some way to get the media ecosystem compensated. Otherwise, there will be no media ecosystem and content in the future to scrape. But when it comes to actually providing news, they're taking very different attitudes. I mean, the Financial Times is not using AI to write stories in any formal sense, maybe to do some research, but not to write stories. But it is to aggregate news headlines, for example. And I suspect you'll see a lot more of that going forward.

And as far as CEOs are concerned, as Reid says, many of them have barely started thinking about it yet, but they need to quite urgently, because if they don't, they will get overtaken. And apart from anything else, they won't actually realize, you know, how to familiarize themselves in the way they see both the benefits and the risks around it.

Parker: Reid, I wanna pick up on something Gillian mentioned, which was the tutorial model, at those, you know, Cambridge and Oxford. It's famous. It's so successful. One of the companies that you cofounded a few years ago with Mustafa Suleyman was Pi, personal intelligence. Can you expand on that vision of having this idea of personal intelligence alongside you?

Reid: So, one of the things that's another kind of startling prediction is that, within a small number of years (this will be a little further down than the earlier predictions I made), when we have a kid, we will actually have them have an agent that will go with them through their entire life and, you know, learn and help and so on with them. By the way, we will adopt that as adults sooner because we won't have all the complexities around, well, what are the set of things around the child. And so part of that is having essentially a companion. And part of our idea when we built Pi, you know, pun intended, but it's a personal intelligence, but, you know, apple pie, etc., is training for this. And in my, you know, earlier book, Impromptu, I called it amplification intelligence, although augmentation intelligence is good too.

When we're gonna be amplified, you don't just need IQ, you also need EQ. You need conversational capability. And part of that is to actually be a very good, you know, kind of companion in the things you're doing. And so Pi, I think, you know, kind of set the standard for all of the other GPT-4-class models on how do you put an EQ, how do you have it, you know, be a conversationalist and ask questions, you know, and how does it help solve a variety of those kind of, like, you know, kind of life navigation. And it applies to work too, because social intelligence is part of the meeting, part of kind of collaborating with teams, and that is important. But, you know, obviously, people don't necessarily think of that in its kind of top role. But that's what Inflection and Pi are about. Now we've seen other agents, you know, like Anthropic with Claude and others, beginning to develop along a similar line.

Parker: How should company leaders, CEOs help their workforces navigate some of these natural threats that people will feel as they see parts of their job, not just the augmentation parts, which I think people will be excited about, but bits that they maybe spent ten, twenty years becoming an expert on, watching AI do parts of that as well as them. I think that's gonna be the crux of change management in companies.

Gillian: The really interesting thing for companies right now, I think, is actually, in many ways, the entry-level jobs, because what AI is replacing above all else are a number of the boring entry-level jobs that graduate trainees in particular would do for a couple of years to have effectively an apprenticeship in a white-collar way into the wider world of work. And by that, I mean, you know, sort of early career engineers, early career lawyers, paralegals, or in the case of journalists, you know, your classic grad trainee journalist. And when I was running, you know, bits of the FT, you know, I used to make all the new entrants do really dumb, boring stuff like write the markets column, which frankly could have been done by, you know, automation twenty years ago. But we still had people doing that, often because it was a really good training ground for learning how to handle data and information.

So one of the questions is gonna be, how are we gonna train the next generation into apprenticeships and entry-level jobs? The flip side of that, though, is that if we start calling it augmented intelligence or artificial intelligence and start trying to train people how to use it to make their job more effective, we may actually start to see people not only using it to be more productive, but creating whole new categories of work that we haven't even imagined yet, because that's been the story of calculators and computers.

Reid: Actually, for young people right now, part of what I advise them to do is become as expert as possible in AI and come to the organizations going, I can be part of your AI transformation. Like, I will front end, I will use this too. I'm an AI native. I'm part of generation AI and doing that. I actually think that's part of how the transformation is gonna happen. And by the way, when you get to, well, how are we doing apprenticeship and so on, AI is, like, by just many, many miles, the best educational tool we have created in human history. Right?

So the question around, like, it almost gets back to, like, we go back to what Gillian was talking about in terms of the Oxford-Cambridge model. We can now have essentially, you know, a kind of a quasi, not the same and better with an Oxford or, you know, kind of, Cambridge on. But we can have one that is interacting one on one with every individual and helping them, you know, kind of get better on, you know, kind of the way they're thinking, what they're doing. And so you say, well, how do we get people up the curve? Well, actually, in fact, using AI and learning from AI and then using AI to do the work is part of what I think is gonna happen. And then precisely as Gillian was gesturing, we'll say, hey, you know, as opposed to having, you know, twenty lawyers, we only need four. But then we're gonna figure out other new things that we need to be doing or can be doing that are really good for how we do business, risk mitigation, analysis, contracts, etc. And then the work will expand in, you know, in different ways just as it has, you know, in every adoption of technology.

While there's concerns and things to navigate, the human amplification is, like, just simply amazing. We have line of sight to a medical assistant on every smartphone running at under, you know, five pounds, five dollars per hour that is there 24/7 for everyone who has access to kind of a smartphone. We have a legal assistant, a tutor, etc. All of these things are part of that kind of amplification. And, you know, how do we get there? Like, here's, I'll end with that kind of, one of the ways that I think white-collar work will be changing, which is we already have coding copilots that essentially people with engineering mindsets are using. I think every white-collar job will have a coding copilot assistant that, part of how you're doing journalism, teaching, evaluation, analysis, accounting will be actually, in fact, having an AI system that's doing coding with you in order to be accomplishing those missions.

Gillian: Yeah. The key question we face is that we know that, you know, like, any innovation can either unleash our demons or the angels of our better nature. That applies to electricity. It applies to guns. It applies to nuclear power. It applies to anything that we've created. And if we look at social media, the reality is it unleashed our demons for the most part. They overwhelmed the angels of our better nature. I do think that AI, agentic AI, does have ways to potentially unleash our angels, and the question really is how. And I would argue simply, to be totally biased, that mixing artificial intelligence or accelerated intelligence with anthropology intelligence, i.e., a sense of our own humanity, the other AI, is one way to go.

Parker: I think that's a terrific ending, a terrific inspiration. The mission of our time is to ensure that we steer AI's adoption by humanity to unleash our better angels. What a terrific conversation. Reid, Gillian, thank you both so much for making the time to join us. We really appreciate it.

We sat down with LinkedIn co-founder Reid Hoffman and Financial Times editorial board chair Gillian Tett to go beyond the headlines and get a deeper understanding of the economic and workforce impact of AI. Their message for HR leaders: act now, because the change is already here.

1. AI is here to amplify human potential. Instead of focusing on AI as a way to cut costs or reduce headcount, Reid and Gillian see AI as augmentative: a new member of the team that changes workflows and unlocks capacity for human creativity.

2. "AI is the best educational tool we have created in human history," Reid says. There are real challenges around AI's impact on how young people learn and develop the skills they need to enter the workforce. But Reid and Gillian explore how AI can create new models of education and training that personalize instruction in a way that was previously only possible at the most prestigious universities.

3. With AI, everyone becomes a manager. Reid sees a near future where every employee has a team of AI assistants that they manage to get work done. "There won't be such a thing as individual contributors anymore." In this world, the same EQ skills that make people great managers and coworkers become the skills that make them AI super-users.

4. "If you're not using AI, you're going to be under-tooled," says Reid. From leveraging AI to run better meetings to reimagining what's possible to achieve with an AI assistant at every employee's side, Reid and Gillian outline concrete starting points for driving change at scale. Because, as Reid says, in six months, if AI isn't embedded in your workflows, "It'll be like saying, 'I'm a carpenter, but I use rocks, not hammers.'"

Co-Founder at LinkedIn, Manas AI, & Inflection AI

Editorial Board Chair, Financial Times

What’s the most overused word when it comes to AI? According to Geoffrey Hinton, one of the inventors of the modern LLM, it’s “hype”: AI is underhyped, not overhyped. He explains the power of AI coaches and assistants in healthcare, education, and the workplace, and what leaders most need to understand about the technology.

Nobel Laureate, "Godfather of AI"

Parker Mitchell: When we last chatted in, I think it was November or maybe October, you were two weeks into the university giving you a personal assistant. Can you share more what it's been like having that and we may be able to extrapolate to everyone having the equivalent of that?

Geoffrey Hinton: So fairly recently, I woke up earlier than usual. And up until that point, I've been thinking, maybe I don't need the personal assistant anymore because when I look in my mailbox, there's only about sort of things to be dealt with. But the morning I woke up early, I discovered there were hundreds of things to be dealt with because my personal assistant was just dealing with them. That was kind of essential.

Parker: And when you look at how she has learned about you and the way you might answer questions or how you would assess a situation, what has it been like as she's gotten to know you personally better?

Geoffrey: It's been good. She's getting much better at knowing which questions I want to answer myself, which talks I might be interested in giving, and which talks I'm definitely not interested in giving. She can pretty much recognize my former students.

To begin with, one of my former students would send me mail, and they'd get a very polite answer saying I was busy. And I remember talking to students who said, "I got this answer from you. Didn't sound like you." And so now I tell my students, if you ever get a really polite answer, that's not me.

Parker: Don't have time to write the full polite answer. And so in some ways, she's acting as your proxy. She's learned how you see the world and is placing that as a first filter. How would AI develop that ability to do the proxies to help people navigate how work and life might change as AI is able to automate more things? Do you see a world where people will have many different specialized assistants or just one that knows them? Any thoughts on that?

Geoffrey: It's a very good question. Why do you need to train one big neural net to do everything? Because that's more efficient in the long run, because you can share what different tasks have in common. So there's always this tension with having a small neural net specialized to one thing, which doesn't have much training data. If you've got enough training data, that's a sensible thing to do.

So if we have huge amounts of training data, it's quite sensible to have many small neural nets, each of which is only trained on a tiny fraction of the training data, and a manager who decides which neural net should answer each question. If you don't have that much training data, it's typically better to have one neural net that's learned on all the training data. And then maybe after you've trained on all the training data, you might fine-tune it to be a specialist in different domains, and that seems to be a good compromise. Train one neural net on everything, and then in particular domains fine-tune it for that domain.
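As an editorial aside, the "many small specialists plus a manager" arrangement Geoffrey describes can be sketched in a few lines. This is a toy illustration, not anything from the session: the specialist names, the keyword lists, and the `manager` and `answer` functions are all hypothetical, and the keyword-counting manager is a stand-in for what would in practice be a learned routing model.

```python
import re
from typing import Callable, Dict, Set

# Hypothetical specialists. In a real system each would be a separately
# trained model; here they are plain functions so the sketch is runnable.
def scheduling_specialist(question: str) -> str:
    return f"[scheduling] {question}"

def email_specialist(question: str) -> str:
    return f"[email] {question}"

def general_model(question: str) -> str:
    return f"[general] {question}"

SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "scheduling": scheduling_specialist,
    "email": email_specialist,
    "general": general_model,
}

# Assumed keyword lists; a learned manager would replace this table.
KEYWORDS: Dict[str, Set[str]] = {
    "scheduling": {"meeting", "calendar", "talk"},
    "email": {"email", "reply", "inbox"},
}

def manager(question: str, default: str = "general") -> str:
    """Pick the specialist whose keywords overlap the question the most."""
    words = set(re.findall(r"[a-z]+", question.lower()))
    scores = {name: len(words & kws) for name, kws in KEYWORDS.items()}
    best = max(scores, key=scores.get)
    # Fall back to the general model when no specialist matches at all.
    return best if scores[best] > 0 else default

def answer(question: str) -> str:
    """Route the question through the manager to one specialist."""
    return SPECIALISTS[manager(question)](question)

print(answer("Can you give a talk next month?"))
print(answer("What is the capital of France?"))
```

The fallback to a general model loosely mirrors the compromise described above: one broadly trained model handles everything, with specialization layered on only where a domain clearly applies.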

Parker: I mean, it sounds like if I look through the history, people said, you know, it might do this, but it won't do a, b, c. And I think your answer sounds like it could be just a matter of time and scale, maybe data.

Geoffrey: Go back ten years and take anything that people said it couldn't do. It's now doing it.

Parker: And so if we fast forward now ten years in the future, obviously, the implications for society are huge. But on the positive use cases, health care is one. Tell us a little bit about why that is so personally important to you and how that could evolve over the next, let's say, five years.

Geoffrey: What a family doctor does, the sort of first line. The family doctor knows quite a bit about you, maybe knows something about your family, maybe even knows a few things about your genetics. But she's only seen a few thousand patients. I mean, almost certainly, she's seen less than a hundred thousand patients in her life. There just isn't time. An AI doctor could have seen the data on millions of patients, hundreds of millions of patients, and also could know a lot about your genome, a lot about how to integrate information from the genome with information from tests. So you're gonna get much better family doctors with AI. And we're gonna get all sorts of things like that for CAT scans and MRI scans, where AI can see all sorts of things that current doctors don't know how to see.

Parker: I brought up that example to a doctor who had looked at the interaction between radiologists and AI, and there were a few different scenarios.

So one is, you know, AI is confident and the doctor is confident. Same diagnosis, obviously easy.

Geoffrey: If not, I would trust the AI.

Parker: So they were doing a study on this. But what was interesting is if a doctor is, you know, confident it's x and AI is confident it's y, the doctor chooses to go with their own diagnosis.

Geoffrey: Fair enough.

Parker: Now if the doctor is not confident and the AI is also not confident, the doctor chooses the AI solution with the sort of human thinking of, like, well, if I'm not sure, I'll blame it on the AI for being wrong. I just thought the human nature of that feels so real and dangerous at the same time.

Geoffrey: Yeah. I think that's telling us more about human nature than about what the optimal strategy is.

Parker: Absolutely. And ways that we might misuse AI in the human-AI interaction.

Geoffrey: The thing I know a bit more about, from a paper that's more than a year ago now, is you take a bunch of cases that are difficult to diagnose. So this isn't scans. This is, you're given the description of the patient and the test results. And on these difficult cases, doctors get 40% of them right, an AI system gets 50% of them right, and the combination of the doctor and the AI system gets 60% right.

And if I remember right, the main interaction is that the doctor would often make mistakes by not thinking about a particular possibility, and the AI system will raise that possibility. It'll have a list of possibilities. And when the doctor sees that possibility, the doctor will say, oh, yeah. The AI system is right there. I didn't think about that. That's one way in which the combination works much better. The AI system doesn't fail to notice things in the same way a doctor often does. But there, it's already the case that, and this was more than a year ago, the combination of AI system and doctor is much better at doing diagnosis than the doctor alone.

Parker: And what it sounds like the AI is doing is generating a scenario-specific checklist. Here are a range of different things, and it could do that very quickly, and a doctor can just look at that and go, no. No. No. Oh, maybe this. And it sort of allows the doctor to do a little more system one intuition on those and then pay more attention to the ones they think are important, versus difficult system two thinking across every possibility.

Geoffrey: Yeah. So that's certainly one of the things that's going on. The other thing that's going on, of course, is you get the ensemble effect. If you have two experts who work very differently and you average what they say, you'll do better then.
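As an editorial aside, the ensemble effect Geoffrey mentions can be checked with a small simulation. This is a minimal sketch under stated assumptions, not data from the study he refers to: two hypothetical experts each estimate a numeric quantity with independent random errors of similar size, and the function name and parameters are invented for illustration.

```python
import random

def simulate_ensemble(n_cases=10_000, noise_a=1.0, noise_b=1.0, seed=0):
    """Compare the mean squared error of two experts and of their average.

    Assumption: the experts' errors are independent Gaussians, which is a
    rough model of "two experts who work very differently".
    """
    rng = random.Random(seed)
    sq_err_a = sq_err_b = sq_err_avg = 0.0
    for _ in range(n_cases):
        truth = rng.uniform(0.0, 10.0)
        guess_a = truth + rng.gauss(0.0, noise_a)
        guess_b = truth + rng.gauss(0.0, noise_b)
        average = (guess_a + guess_b) / 2.0
        sq_err_a += (guess_a - truth) ** 2
        sq_err_b += (guess_b - truth) ** 2
        sq_err_avg += (average - truth) ** 2
    return {
        "expert_a": sq_err_a / n_cases,
        "expert_b": sq_err_b / n_cases,
        "average": sq_err_avg / n_cases,
    }

results = simulate_ensemble()
for name, mse in results.items():
    print(f"{name}: mean squared error = {mse:.3f}")
```

With equally noisy, independent experts, the averaged estimate has roughly half the mean squared error of either expert alone. The benefit depends on those assumptions: if the two experts make the same mistakes, or if one is far noisier than the other, simple averaging can do worse than listening to the better expert.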

Parker: Anything that's processing vast amounts of data, finding patterns and similarities, and then identifying sort of promising candidates, for humans in that sort of collaborative model you mentioned, that's gonna power things.

Part of that leads to my next topic, which is around personalization. And so we'll be in a world where your biology is different from mine, it's different from someone else's. And so that intervention on the medical side can be more tailored to each of us. Is there research currently going on around how that might, you know, change health outcomes?

Geoffrey: I believe there is. I don't know as much as I should about this. But for example, in cancer, you'd like to use your own immune system to fight it, and you'd like to sort of help your immune system recognize the cancer cells. And there's many ways of doing that. I think AI is already being used to choose which things to mess with.

Parker: Are most likely to work for your particular area.

Geoffrey: So that would be individual therapy based on AI. And then, obviously, in education, AI is gonna be very useful. And, again, it's gonna be individual therapy for misunderstandings. An AI system that's seen thousands or millions of people learning about something and there are different ways in which different people misunderstand, that will be very good at recognizing for an individual person, oh, they're misunderstanding in this way. It's what a really good teacher can do. They're misunderstanding this way, and here's an example that will make it clear to them what they're misunderstanding.

AI is gonna be very good at that, and we're gonna get much better tutors. We're not there yet, but we're beginning to get there. And I'm now happy to predict that in the next ten years, we'll have really good AI tutors. I may be wrong by a factor of two, but it's gonna, it's coming.

Parker: You mentioned on the AI tutor side of things for students. I think there was a study that you referenced about how much better the outcome is when people get individualized tutors.

Geoffrey: Yeah. I can't, I don't have the citation for it, but the number I remember quite well, and I've seen it quoted elsewhere too, which is you learn about twice as fast with a tutor as in a classroom. And it's kind of obvious why. First of all, you don't, your attention doesn't lapse. You're interacting with somebody, so your attention stays on it. You don't just stare out the window and wait 'til the lesson ends. I spent a lot of my time at school doing that.

Secondly, the person's attending to you and can see what you're getting wrong and give you, correct it. And in a classroom, you can't do that. So it's sort of obvious why a human tutor is gonna be much more efficient than a classroom. An AI tutor should be better than a human tutor eventually. Right now, it's probably worse, but getting there. And so my guess is it will be three or four times as efficient once we have really good AI tutors because they would have seen so much more data.